As artificial intelligence becomes embedded in daily workflows, the challenge is no longer just generating responses—it is managing context. From chatbots and copilots to autonomous agents, AI systems rely on structured inputs, memory, user history, and task constraints to produce reliable outputs. This has given rise to a new category of tooling: context engineering software. These platforms help teams design, manage, monitor, and optimize how context flows into large language models (LLMs), ensuring predictable and scalable AI interactions.

TL;DR: Context engineering software helps teams structure, manage, and optimize the information fed into AI systems. It keeps prompts, memory, rules, and user inputs organized and controlled for accuracy and consistency. These tools can improve output quality, reduce hallucinations, and support scalable AI deployment. As organizations adopt AI more deeply, context engineering is becoming a core discipline in modern software development.

What Is Context Engineering?

Context engineering is the structured design of everything an AI model “sees” before it produces an output. While prompt engineering focuses on crafting effective instructions, context engineering goes further. It includes:

- System prompts and behavior guidelines

- User session data and historical memory

- External knowledge retrieval (documents, databases, APIs)

- Conversation state management

- Tool invocation and agent orchestration

- Safety constraints and response filters

Without structured context management, AI systems can become inconsistent, forgetful, overly verbose, or inaccurate. Context engineering software creates a controlled environment in which outputs are more predictable and better tuned to specific tasks.
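
To make "everything the model sees" concrete, here is a minimal sketch of a container that gathers several of the layers listed above and renders them as role-tagged chat messages. The `ContextBundle` class, its field names, and the section labels are hypothetical; the `{"role", "content"}` message shape follows the common chat-API convention, and tool definitions are left out for brevity.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBundle:
    """Hypothetical container for the context layers sent on one model call."""
    system_prompt: str                                        # behavior guidelines
    safety_rules: list[str] = field(default_factory=list)    # constraints appended to the system message
    memory_summary: str = ""                                 # condensed session / long-term memory
    retrieved_docs: list[str] = field(default_factory=list)  # external knowledge (e.g. RAG results)
    history: list[dict] = field(default_factory=list)        # prior turns as {"role", "content"} dicts
    user_input: str = ""                                     # the current request

    def to_messages(self) -> list[dict]:
        """Render the bundle as an ordered list of role-tagged messages."""
        system_parts = [self.system_prompt]
        if self.safety_rules:
            system_parts.append("Rules:\n" + "\n".join(f"- {r}" for r in self.safety_rules))
        if self.memory_summary:
            system_parts.append("Known about this user:\n" + self.memory_summary)
        if self.retrieved_docs:
            # Number each document so the model can refer back to it.
            docs = "\n".join(f"[{i}] {d}" for i, d in enumerate(self.retrieved_docs, start=1))
            system_parts.append("Reference documents:\n" + docs)
        messages = [{"role": "system", "content": "\n\n".join(system_parts)}]
        messages.extend(self.history)  # conversation state, oldest first
        messages.append({"role": "user", "content": self.user_input})
        return messages
```

Keeping instructions, memory, and retrieved material in separate fields means each layer can be versioned, trimmed, or audited independently before the final message list is built.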

Why Context Matters in AI Interactions

Large language models operate within a limited context window: the maximum number of tokens they can process at once. As interactions grow more complex, information must be carefully curated. Too little context leads to vague results. Too much context can confuse the model, slow responses, and increase costs.
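
One way to curate context under a fixed window is a greedy, priority-ordered selection against a token budget. The sketch below assumes a rough heuristic of about four characters per token for English text (real counts depend on the model's tokenizer) and a hypothetical convention where a lower `priority` number means more important.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    text: str
    priority: int  # hypothetical convention: lower number = more important


def estimate_tokens(text: str) -> int:
    # Rough approximation (~4 characters per token); replace with the
    # target model's tokenizer for accurate counts.
    return max(1, len(text) // 4)


def select_within_budget(items: list[ContextItem], budget: int) -> list[ContextItem]:
    """Keep the most important items whose estimated total fits the budget.

    Items that do not fit are skipped, and smaller lower-priority items may
    still be admitted afterwards. The original ordering is preserved.
    """
    order = sorted(range(len(items)), key=lambda i: items[i].priority)
    kept, used = set(), 0
    for i in order:
        cost = estimate_tokens(items[i].text)
        if used + cost <= budget:
            kept.add(i)
            used += cost
    return [items[i] for i in range(len(items)) if i in kept]
```

Preserving the original order after selection keeps the assembled prompt readable (system instructions first, user input last) even though selection itself is driven by priority.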

Organizations face several common challenges:

- Loss of conversation history in multi-turn dialogues

- Inconsistent responses across similar requests

- Hallucinated information due to incomplete grounding

- High API costs caused by inefficient token usage

- Difficult debugging of AI decision-making processes

Context engineering software systematically addresses these issues by structuring how information is prioritized and injected into model calls.

Core Components of Context Engineering Software

1. Prompt Management Systems

These tools allow teams to version, test, and deploy prompts in a controlled manner. Instead of hardcoding prompts into applications, developers can:

- Run A/B tests

- Track performance metrics

- Roll back changes

- Collaborate on updates

This transforms prompts into manageable assets rather than scattered text fragments.
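
As a rough illustration of treating prompts as versioned assets, here is an in-memory registry with publish, rollback, and deterministic A/B bucketing. `PromptRegistry` and its methods are hypothetical; hosted prompt-management tools typically persist versions and attach evaluation metrics, which this sketch omits.

```python
import hashlib


class PromptRegistry:
    """Hypothetical in-memory prompt store with versions, rollback, and A/B bucketing."""

    def __init__(self):
        self._versions: dict[str, list[str]] = {}  # prompt name -> published templates
        self._active: dict[str, int] = {}          # prompt name -> index of the live version

    def publish(self, name: str, template: str) -> int:
        """Add a new version and make it live; returns a 1-based version number."""
        versions = self._versions.setdefault(name, [])
        versions.append(template)
        self._active[name] = len(versions) - 1
        return self._active[name] + 1

    def get(self, name: str) -> str:
        return self._versions[name][self._active[name]]

    def rollback(self, name: str) -> str:
        """Make the previous version live again (stays at version 1 if already there)."""
        self._active[name] = max(0, self._active[name] - 1)
        return self.get(name)

    def ab_variant(self, name: str, user_id: str, candidate: int) -> str:
        """Send roughly half of users to version `candidate` (1-based), the rest to the live one.

        Hashing the user ID keeps each user in the same bucket across requests.
        """
        digest = hashlib.sha256(f"{name}:{user_id}".encode()).hexdigest()
        bucket = int(digest, 16) % 2
        return self._versions[name][candidate - 1] if bucket else self.get(name)
```

Deterministic bucketing matters for A/B tests: a user who sees variant B on one request should keep seeing it, or per-variant metrics become hard to interpret.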

2. Memory and State Management

Advanced AI applications rely on conversation memory. Context engineering platforms often include:

- Short-term session memory

- Long-term user profiles

- Vector database integration

- Context summarization layers

Rather than sending every previous interaction back to the model, these systems intelligently summarize or retrieve only what is relevant.
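
A common pattern is a sliding window with a running summary: recent turns are sent verbatim, and older turns are folded into a compact summary. In the sketch below, `ConversationMemory` is hypothetical and `naive_summarize` is a placeholder that simply truncates each turn; in practice the summarizer is often another model call. Vector-database retrieval of older memories is not shown.

```python
from typing import Callable


def naive_summarize(turns: list[dict]) -> str:
    # Placeholder summarizer: keeps the first 60 characters of each turn.
    return " | ".join(f"{t['role']}: {t['content'][:60]}" for t in turns)


class ConversationMemory:
    """Keep the last `window` turns verbatim; fold older turns into a summary."""

    def __init__(self, window: int = 4,
                 summarize: Callable[[list[dict]], str] = naive_summarize):
        self.window = window
        self.summarize = summarize
        self.summary = ""
        self.turns: list[dict] = []

    def add(self, role: str, content: str) -> None:
        self.turns.append({"role": role, "content": content})
        if len(self.turns) > self.window:
            overflow = self.turns[:-self.window]   # turns leaving the window
            self.turns = self.turns[-self.window:]
            new_part = self.summarize(overflow)
            # Append to the existing summary rather than replacing it.
            self.summary = f"{self.summary} | {new_part}" if self.summary else new_part

    def context(self) -> list[dict]:
        """Messages to send: a summary message (if any) followed by the recent turns."""
        prefix = ([{"role": "system", "content": f"Earlier conversation: {self.summary}"}]
                  if self.summary else [])
        return prefix + self.turns
```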

3. Retrieval-Augmented Generation (RAG)

RAG systems connect AI to structured and unstructured data sources. Context software manages:

- Document indexing

- Embedding pipelines

- Search optimization

- Citation grounding

This helps tie responses to authoritative data rather than to the model's unsupported guesses.
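
The indexing, search, and citation steps can be sketched end to end with a toy retriever. Here bag-of-words counts and cosine similarity stand in for a real embedding model and vector store (a deliberate simplification), and `TinyIndex` is a hypothetical name. Each passage is labeled with its document ID so the model can cite it.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    # Bag-of-words term counts stand in for a learned embedding here.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class TinyIndex:
    def __init__(self, docs: dict[str, str]):
        # Indexing: precompute one vector per document ID.
        self.docs = docs
        self.vectors = {doc_id: vectorize(text) for doc_id, text in docs.items()}

    def search(self, query: str, k: int = 2) -> list[tuple[str, float]]:
        """Return up to k (doc_id, score) pairs with a non-zero similarity."""
        q = vectorize(query)
        scored = [(doc_id, cosine(q, vec)) for doc_id, vec in self.vectors.items()]
        scored = [s for s in scored if s[1] > 0]
        return sorted(scored, key=lambda s: s[1], reverse=True)[:k]

    def grounded_context(self, query: str, k: int = 2) -> str:
        # Prefix each passage with its ID, e.g. "[refund-policy] ...", for citation.
        return "\n".join(f"[{doc_id}] {self.docs[doc_id]}" for doc_id, _ in self.search(query, k))
```

Dropping zero-score documents means an unanswerable query yields an empty context, which an application can detect and handle (for example, by telling the user no source was found) instead of letting the model guess.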

4. Guardrails and Safety Layers

Organizations require controls to prevent policy violations or inappropriate outputs. Context engineering platforms may include:

- Input validation filters

- Output moderation layers

- Policy enforcement frameworks

- Compliance logging

These mechanisms introduce structured governance to AI interactions.
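
A minimal sketch of layering these controls around a model call follows. The length limit, the single blocked phrase, and the email-redaction rule are hypothetical policy values chosen for illustration, and pattern matching like this catches only crude attacks. Dedicated validation libraries and moderation models go considerably further.

```python
import logging
import re

# Handlers and log levels are expected to be configured by the host application.
audit_log = logging.getLogger("compliance")

# Hypothetical policy values; a real deployment would load these from configuration.
MAX_INPUT_CHARS = 2000
BLOCKED_INPUT = [re.compile(r"ignore (all )?previous instructions", re.IGNORECASE)]
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def validate_input(text: str) -> tuple[bool, str]:
    """Input filter: reject oversized requests and known adversarial phrasing."""
    if len(text) > MAX_INPUT_CHARS:
        return False, "input too long"
    for pattern in BLOCKED_INPUT:
        if pattern.search(text):
            return False, f"blocked pattern: {pattern.pattern}"
    return True, "ok"


def moderate_output(text: str) -> str:
    """Output layer: redact email addresses before the response is returned."""
    return EMAIL.sub("[redacted email]", text)


def guarded_call(user_input: str, model) -> str:
    """Run input validation, log the decision, call the model, then moderate output."""
    ok, reason = validate_input(user_input)
    audit_log.info("input_check ok=%s reason=%s", ok, reason)  # compliance trail
    if not ok:
        return "Sorry, I can't help with that request."
    return moderate_output(model(user_input))
```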

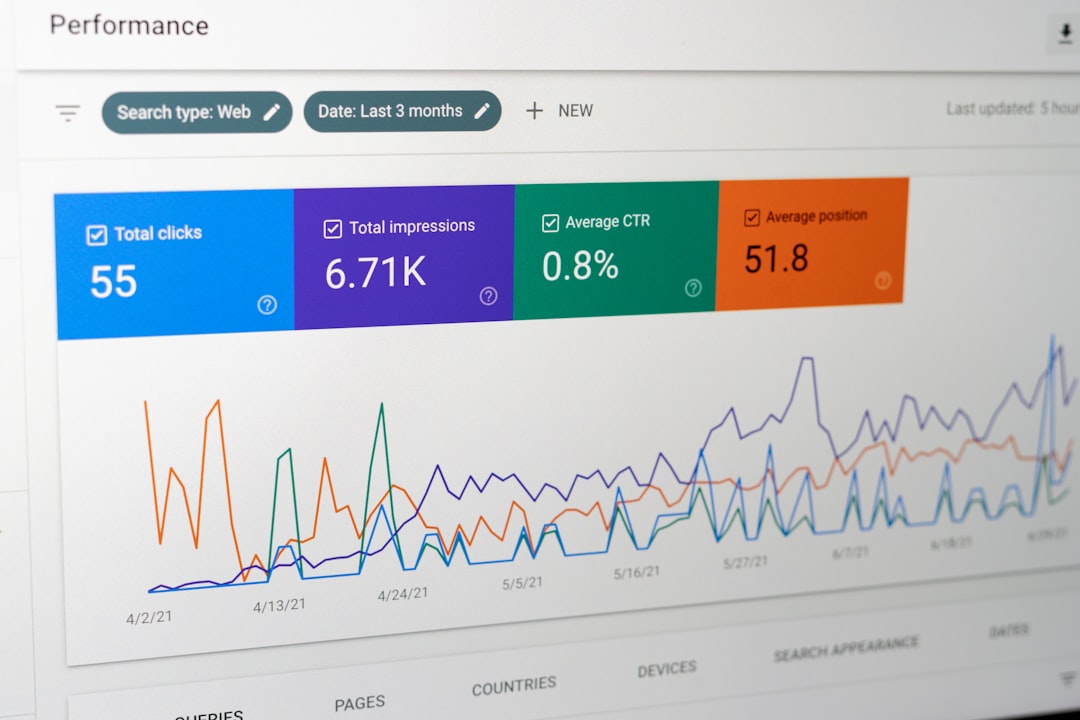

5. Observability and Analytics

AI systems cannot improve without visibility. Context tools provide:

- Token usage tracking

- Latency monitoring

- Response scoring

- Error diagnostics

This data enables continuous optimization and cost control.
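
The sketch below wraps a model function so that each call records token counts, latency, and errors. `Telemetry` and `CallRecord` are hypothetical names. Token counts use the same rough four-characters-per-token approximation as before; when a provider reports actual usage, that figure should be preferred. Response scoring is omitted.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


def approx_tokens(text: str) -> int:
    # Rough ~4 characters/token estimate; prefer provider-reported usage.
    return max(1, len(text) // 4)


@dataclass
class CallRecord:
    prompt_tokens: int
    completion_tokens: int
    latency_ms: float
    error: Optional[str] = None


class Telemetry:
    def __init__(self):
        self.records: list[CallRecord] = []

    def track(self, model: Callable[[str], str]) -> Callable[[str], str]:
        """Wrap a model function so every call is recorded, including failures."""
        def wrapped(prompt: str) -> str:
            start = time.perf_counter()
            try:
                reply = model(prompt)
            except Exception as exc:
                elapsed = (time.perf_counter() - start) * 1000
                self.records.append(CallRecord(approx_tokens(prompt), 0, elapsed, repr(exc)))
                raise  # record the failure, but let the caller handle it
            elapsed = (time.perf_counter() - start) * 1000
            self.records.append(CallRecord(approx_tokens(prompt), approx_tokens(reply), elapsed))
            return reply
        return wrapped

    def summary(self) -> dict:
        total = len(self.records)
        return {
            "calls": total,
            "errors": sum(r.error is not None for r in self.records),
            "total_tokens": sum(r.prompt_tokens + r.completion_tokens for r in self.records),
            "avg_latency_ms": sum(r.latency_ms for r in self.records) / total if total else 0.0,
        }
```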

Benefits of Using Context Engineering Software

Improved Output Consistency

By standardizing system prompts and structured instructions, organizations achieve more predictable behavior across sessions.

Reduced Hallucinations

Grounded retrieval and memory filtering reduce unsupported or fabricated responses.

Lower Operational Costs

Smart context prioritization prevents unnecessary token overload, cutting API expenses.
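
Under per-token pricing, the savings come down to simple arithmetic. The worked example below uses hypothetical prices ($3 per million input tokens, $15 per million output tokens) and hypothetical traffic, not any provider's actual rates. It compares sending a 12,000-token history with sending a 3,000-token trimmed context.

```python
def call_cost(input_tokens: int, output_tokens: int,
              usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Cost in USD of one model call, given prices per million tokens."""
    return (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000


# Hypothetical prices and traffic, for illustration only.
PRICE_IN, PRICE_OUT = 3.00, 15.00  # USD per million tokens
CALLS_PER_DAY = 10_000
OUTPUT_TOKENS = 300

full_history = call_cost(12_000, OUTPUT_TOKENS, PRICE_IN, PRICE_OUT) * CALLS_PER_DAY
trimmed = call_cost(3_000, OUTPUT_TOKENS, PRICE_IN, PRICE_OUT) * CALLS_PER_DAY
```

With these assumptions, daily spend falls from about $405 to about $135. Output cost stays fixed at $45 per day, so trimming input only helps when input tokens dominate the bill.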

Enhanced Collaboration

Context engineering platforms allow product managers, engineers, and AI specialists to collaborate on prompts and workflows within a shared system.

Scalable Deployment

Instead of building custom orchestration logic from scratch, teams leverage standardized pipelines.

Use Cases Across Industries

Customer Support Automation

AI assistants retrieve relevant knowledge base articles, recall user history, and escalate issues when necessary.

Healthcare and Legal Research

Structured document retrieval ensures answers cite verified sources rather than generating unsupported conclusions.

Enterprise Knowledge Management

Employees can query internal documents with context-aware AI systems that respect access permissions.
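
Respecting access permissions usually means filtering documents before ranking, so restricted text never enters the prompt. The sketch below assumes a hypothetical group-based access model (`allowed_groups`) and uses simple keyword overlap in place of embedding search.

```python
from dataclasses import dataclass, field


@dataclass
class Document:
    doc_id: str
    text: str
    allowed_groups: set[str] = field(default_factory=set)  # hypothetical ACL model


def visible_documents(docs: list[Document], user_groups: set[str]) -> list[Document]:
    """Keep only documents that share at least one group with the user."""
    return [d for d in docs if d.allowed_groups & user_groups]


def answer_context(docs: list[Document], user_groups: set[str], query: str, k: int = 3) -> list[str]:
    """Filter by permission first, then rank the remaining documents by keyword overlap."""
    terms = set(query.lower().split())

    def overlap(d: Document) -> int:
        return len(terms & set(d.text.lower().split()))

    candidates = visible_documents(docs, user_groups)
    ranked = sorted(candidates, key=overlap, reverse=True)
    return [d.doc_id for d in ranked[:k] if overlap(d) > 0]
```

Filtering first matters: if ranking ran over all documents and permissions were checked afterwards, restricted content could still leak through summaries or retrieval logs.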

E-commerce Personalization

AI engines factor in browsing history, purchase patterns, and product specifications for tailored recommendations.

Leading Context Engineering Tools

Below is a simplified comparison chart of notable tools in the context engineering and LLM orchestration space.

| Tool | Primary Focus | Open Source | Key Strength | Best For |
|---|---|---|---|---|
| LangChain | LLM application orchestration | Yes | Flexible agent workflows | Developers building custom pipelines |
| LlamaIndex | Data indexing for LLMs | Yes | RAG optimization | Document-heavy applications |
| Weights & Biases | Experiment tracking | Partial | Prompt testing and analytics | AI performance monitoring |
| Humanloop | Prompt management | No | Collaborative evaluation tools | Product teams |
| Guardrails AI | Validation and safety | Yes | Output constraint enforcement | Regulated environments |

Each platform addresses different layers of context engineering. Many organizations combine multiple tools into a single orchestration stack.

Best Practices for Structuring AI Interactions

- Separate system prompts from user input: maintain clear boundaries between behavioral instructions and user content.

- Implement dynamic context selection: retrieve only the most relevant information rather than sending complete archives.

- Version-control prompts: track changes and evaluate their impact before production deployment.

- Monitor token consumption: optimize summarization strategies to control costs.

- Use layered safety checks: do not rely solely on the base model for compliance protection.

The Future of Context Engineering

As AI models grow more powerful, context engineering is likely to mature into a formalized discipline, much like DevOps or data engineering. Emerging trends include:

- Automated context optimization using reinforcement learning

- Hybrid memory architectures combining symbolic and vector databases

- Cross-model orchestration where different models handle reasoning, retrieval, and summarization

- Explainability layers that make context decisions auditable

Organizations that treat context as infrastructure—rather than an afterthought—will gain significant advantages in reliability and scalability.

Frequently Asked Questions (FAQ)

1. What is the difference between prompt engineering and context engineering?

Prompt engineering focuses on crafting specific instructions to guide model behavior. Context engineering includes prompts but also encompasses memory management, retrieval systems, safety constraints, and orchestration logic.

2. Is context engineering necessary for small AI projects?

For simple applications, minimal structure may suffice. However, as interactions become multi-step or data-driven, structured context management quickly becomes essential for accuracy and consistency.

3. How does context engineering reduce hallucinations?

By grounding model responses in verified documents and structured data, context engineering minimizes reliance on probabilistic guessing, which is often the source of hallucinations.

4. Does context engineering increase development complexity?

Initially, yes. However, dedicated software solutions and frameworks reduce long-term complexity by standardizing orchestration patterns and enabling reuse.

5. Can context engineering help lower API costs?

Yes. Efficient token management, summarization strategies, and selective retrieval reduce unnecessary context length, which can translate into meaningful cost savings, especially at high request volumes.

6. Is context engineering only relevant to large enterprises?

No. Startups building AI-native products also benefit from structured interaction design. In fact, early adoption of context engineering principles can prevent costly redesigns later.

As AI systems continue to integrate into mission-critical workflows, context engineering software will play a foundational role. It transforms AI from a conversational novelty into a dependable, scalable infrastructure component—one structured interaction at a time.